RealSense Skeletal Tracking

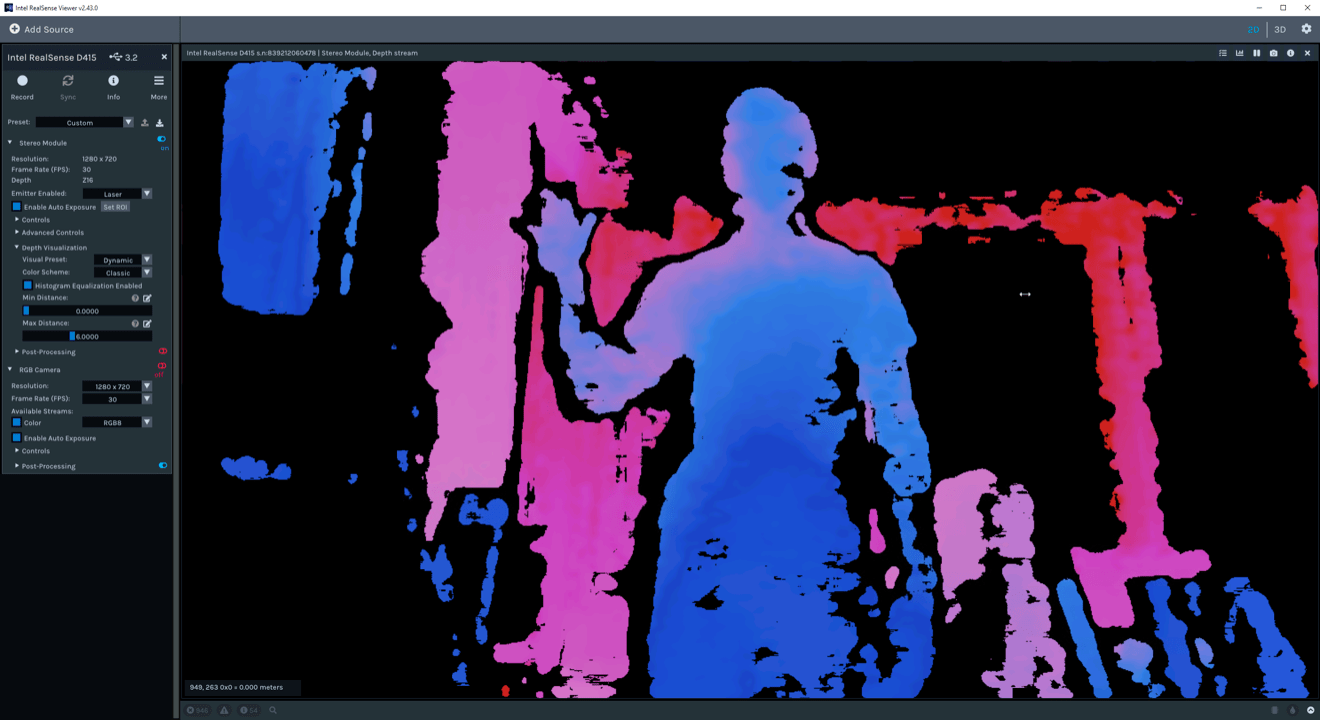

This is the Intel RealSense D415 depth camera.

I tinkered with one of these when I worked on MixCast. We used it for background removal without the need for a green screen. It’s a nifty device. It sports a regular RGB camera but also tells you how far away objects are via… lasers? I don’t understand how it works. It’s like the Xbox Kinect when that was a thing.[1]

On a whim I tried it for skeletal tracking. I first gave Intel’s officially sanctioned SDK a whirl. No luck. Next I tried Nuitrack. Much better. I was able to open their Unity project and wire it up to an armature with minimal effort. I used characters from The Realtime Rascals, assets that Unity built to demo realtime filmmaking.[2]

You don’t need a fancy doodad like a RealSense for skeletal tracking. The flagship iPhones and iPads boast LiDAR sensors, so you might already own a capable depth camera. Even better, check out this tease for no-fuss facial and skeletal tracking in Adobe Character Animator using just a webcam. The future is here.

[1] Last year Microsoft resurrected the Kinect under the Azure banner. It looks like a promising alternative to the RealSense if you need this sort of device.

[2] This is the real reason I dug out my RealSense: to make that frog dance.